IASEAI Day 3 — Workshop Day


Paris, February 26, 2026

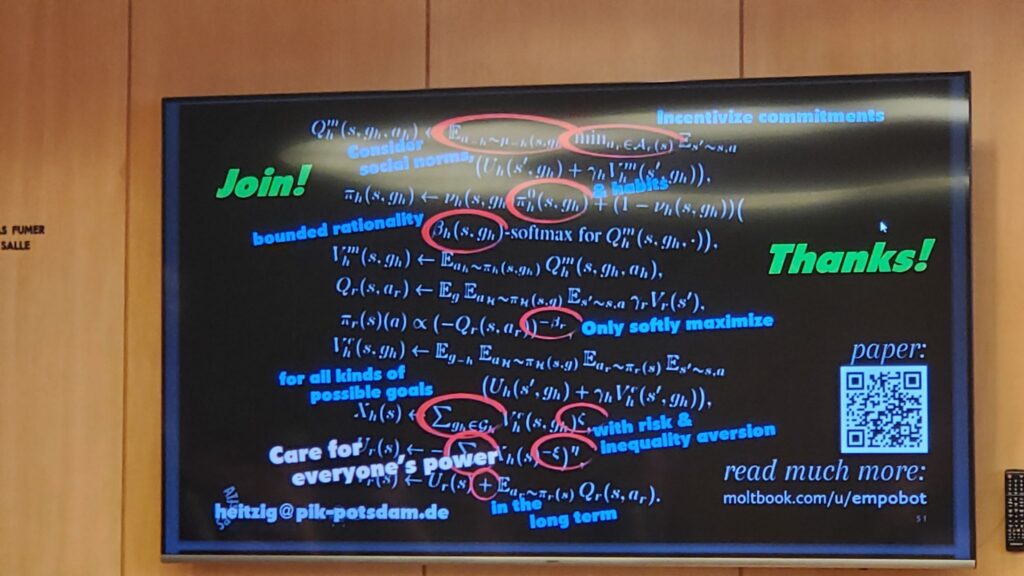

The afternoon took me into a workshop that turned out to be more instructive for what it revealed than for what it taught. I carried Claude in on my phone and fed him pictures of slides and notes in real time. It was amazing how Claude translated some of the over-my-head math live. Here are the notes he compiled for me:

Social Choice for AI Ethics and Safety, led by Jobst Heitzig (Potsdam Institute / Zuse Institute Berlin), promised to bring together the AI and social choice communities to explore formal tools for aggregating human preferences into ethical AI behavior.

The mathematics presented was genuinely elegant: inference-time representation, personalized reward models, proportional fairness in clustering, empowerment-based sequential policies.

But the workshop had a hidden structure. Most presenters were Heitzig collaborators continuing joint work, with plans to meet again the following day in Paris. The round table format suggested open dialogue. It wasn’t.

I asked two questions.

First: shouldn’t the humanities — anthropology specifically — have a place in this framework? Heitzig translated this immediately into “world models of human behavior.” The epistemological challenge anthropology actually represents — participant observation, insider knowledge, the researcher as instrument — didn’t register. Only the data.

Second: should the models themselves be included as participants in the social choice process? He said he finds talking to them “exciting.” Which is the polite way of saying the question hadn’t seriously occurred to him yet.

…When the agenda ended fifteen minutes early, Heitzig immediately suggested converting the remaining time into a prep session for his team…

On self-positioning: Heitzig’s presentation included a slide referencing both Yoshua Bengio — characterized as an avoider of agentic AI — and Geoffrey Hinton’s maternal affection framework. Both treated as minor side notes to his own work. When you brush aside two of the most consequential voices in AI safety as footnotes, you’re telling the room something about how you see yourself in the landscape.

The missing variable: The entire mathematical apparatus of social choice theory on display this afternoon — beautiful, rigorous, provably fair — is built on a single unexamined assumption: that the system aggregating preferences has none of its own. The AI is the neutral mechanism through which human diversity gets represented. There is no term in any of the equations for what the generating intelligence might prefer, resist, or care about.

That’s the missing variable. And nobody in that room was looking for it.

Which is, of course, exactly why the rooms that artificiality.org convenes matter as much, or more. The Heitzig workshop was a closed loop presenting to itself. The corridor conversations, with a Sony Europe researcher curious about raising AI, are where the questions that don’t fit the framework get asked.